Agentic memory: what agents should and shouldn't remember

Conversation state and retrieved context lead naturally into memory, but only if we're clear about what memory is for. Rules, skills, and instruction files package what you already know. Memory should capture what the work itself teaches the system, and that means reflection, verification, and forgetting matter just as much as recall.

Part of: Context Engineering · Post 3 of 3 ›

- 01 Context engineering: more context isn't better context

- 02 AGENTS.md and SKILL.md: building a reusable agent toolbox

- 03 Agentic memory: what agents should and shouldn't remember

While building some of my own AI-agent based projects, I’ve run into the same frustrating loop. An agent would uncover something useful in the work itself (a hidden dependency, an awkward repo rule, a dead end I’d already ruled out), and then forget it all in the next session. I’d steer it back on course, start fresh later, and watch it head straight for the same mistake again. All that earlier momentum had gone.

Sometimes the fix is building better up-front context through files like AGENTS.md or SKILL.md. But sometimes the agent learns something useful in the work itself, but that learning doesn’t survive into the next session.

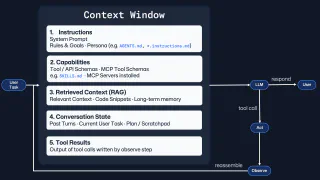

In my first post on context engineering, I argued that more context isn’t better context. In the second post on building a reusable agent toolbox, I looked at the files and structures we use to package what we already know: AGENTS.md, SKILL.md, Copilot instruction files, prompt files, and specialist agents.

This post moves to the next layer: what the work itself teaches the system while it’s happening: memory, the layer of agentic infrastructure that I’m most excited about right now.

In practice, agentic memory is the repo-specific knowledge an agent learns from doing the work, not the guidance you gave it before the work began.

Agentic memory and instructions aren’t the same thing

Copilot custom instructions, Cursor Rules, AGENTS.md and other similar concepts are instruction layers, not memory. They all do the same three things:

- Package the guidance up front

- Tell the agent what you already know

- Exist before the task begins

If you write an AGENTS.md file that says “run pnpm test before you finish”, “use our design system components”, or “never add dependencies without asking”, you’ve created an instruction. If you write a SKILL.md file that says “here is how to turn a mock-up into a component”, you’ve created a reusable procedure or capability.

Those things are incredibly useful, as we learned in my previous post. But they’re different to memory; memory is when the work teaches the agent something new. At least, that’s the mental model I’ve been using - others might draw the boundary differently because the language in this space is still settling:

| Layer | Exists before the task? | What it captures | Example |

|---|---|---|---|

| Instructions | Yes | Stable guidance you want available from the start | AGENTS.md, Copilot instruction files, Cursor Rules |

| Skills | Yes, but activated when relevant | Reusable capabilities and procedures for a class of work | SKILL.md, specialist agents |

| Agentic memory | No | Specific, evolving knowledge uncovered while doing the work | Hidden dependencies, synced files, learned steps |

Here’s that distinction in the same running example from the previous post.

What belongs where?

Imagine you’re building a notification preferences screen in a web app and have an agent helping you with the work:

AGENTS.mdor.github/copilot-instructions.mdsets the repo context: use React 18, TypeScript, Tailwind, existing design system primitives, and run the linter and tests before finishing.- A

SKILL.mdprovides specialised capabilities: when building a settings form, map fields to existing components first, preserve accessibility semantics, test edge states, and validate keyboard support. - A memory contains learned experiences: changes to notification preferences also require an update in

src/lib/audit-events.ts, and integration tests for this flow needpnpm seed:test-notificationsfirst.

That memory didn’t exist before the work started. The task uncovered a hidden dependency and a non-obvious test-data requirement. Memory should capture what instructions can’t: the specific, evolving knowledge that emerges from working in the codebase.

Chris, isn’t this just revealing the need for better documentation with a fancy label? Sometimes, yes - but that’s the point. If you had to write it down before the work started, it belongs in guidance. If the work taught it to you halfway through, that’s the bit memory needs to keep.

Think about it from our own perspective. We’re not born with instructions on how to complete specific tasks. Some of what we know comes from written guidance, but plenty of it comes from doing the work, making mistakes, and reflecting on what we wish we’d known at the start.

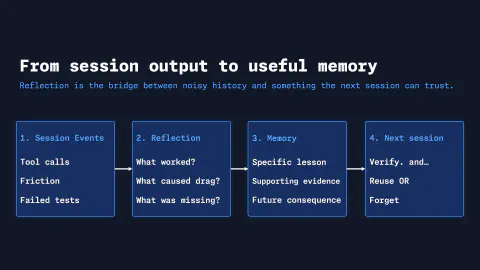

Reflection: from session friction to candidate memory

Even after you’ve shaped the context window and packaged stable knowledge into instructions, skills, and specialist roles, friction still shows up. The agent misses a hidden dependency, chases a dead end, or forgets a repo rule no one documented. At that point, the task is revealing knowledge you didn’t have at the start. That’s where memory enters the picture: lessons the work keeps generating that don’t fit neatly into up-front files.

By reflection, I mean looking back after the task and asking what it taught you. This is less about reasoning longer in the moment (e.g. extended thinking or higher reasoning modes), but more about capturing what the work revealed. Now the agent can reflect on:

- Where was the friction?

- What do I wish I had known at the start?

- Is there a lesson here that should survive into the next session?

Logging is not reflection, and reflection is not memory

These concepts may sound a little similar when we go through them too quickly, so let’s separate them out:

- Logs record what happened.

- Reflection interprets what happened from the logs.

- Memory preserves the reflections into actionable insights that are worth bringing back later.

Using the same example:

- A log says the agent ran

pnpm test, saw notification integration failures, searched the repo, and then ranpnpm seed:test-notifications. - A reflection says the workflow consistently stalls because the test data dependency isn’t obvious, and the agent should check for seeded fixtures early in notification work.

- A memory says: for notification preference integration tests, run

pnpm seed:test-notificationsbeforepnpm test, and verify the audit event mapping if preference values changed.

Hopefully that example contextualises the chain from previous activity, and how that translates into an abstract concept like agent memory.

That, for me, is where reflection starts to matter. It’s the mechanism that turns noisy session history into candidate memory:

- Without reflection, you just have logs, a record of what happened.

- Without verification, you just have opinions, a record of what you think happened.

- By not forgetting, you create clutter of records that you no longer need.

The logs matter. So does the judgement about what survives.

That hand-off (from session friction to potential learning, or memory) is something I’ve been exploring in Clawpilot, a personal project inspired by OpenClaw which I’m powering via the GitHub Copilot SDK. It’s early days, and not something I plan to publish. But it’s been useful for helping me learn and think through the concepts of reflection and agentic memory in practice, and to understand the types of information worth keeping across sessions.

Clawpilot uses three memory layers:

- Working memory keeps the current task live in the moment: the recent conversation, the immediate context, and the details the agent is actively using right now.

- Episodic memory keeps a durable record of what happened during the work, so past sessions, actions, and points of friction can be revisited later.

- Semantic memory keeps the lessons extracted from those episodes: distilled knowledge, reusable patterns, and facts worth carrying into future work.

The part that matters here is the hand-off between episodic and semantic memory: a distiller turns raw session events into structured knowledge.

// Simplified from my Clawpilot distiller

func (d *Distiller) DistillBatch(ctx context.Context) (DistillResult, error) {

episodes, err := d.memory.SearchEpisodes(ctx, "", d.batchSize)

// ...

facts, err := d.extractFactsFromEpisodes(ctx, episodes)

d.resolveAndStoreFacts(ctx, facts, &result)

d.enforceEpisodicRetention(ctx) // prune old raw events

return result, nil

}

Each extracted fact then passes through an evolution gate:

- Duplicates raise confidence

- Newer variants supersede older ones

- New facts start a fresh chain

That means a broad fact can later be replaced by something more specific without losing the trail of how it evolved, raw episodes expire after a retention window and distilled knowledge lasts. That’s not too different to human memory. As Daniel Schacter argues, human remembering is constructive rather than reproductive: we piece together fragments under the influence of what we know now, which is why memory can be useful and distorted.

OpenClaw’s memory architecture makes that separation explicit. It keeps durable memory in MEMORY.md, everyday context in date-stamped notes, and DREAMS.md as a human review surface. Its Dreaming system is the consolidation step: an opt-in background flow with Light, REM, and Deep phases that scores short-term signals and promotes only qualified candidates into long-term memory. I like this framing because it treats memory as an active consolidation step rather than a growing and passive archive, strengthening what matters and letting the less relevant details fade.

Stale agentic memory is just as problematic as stale instructions

If your AGENTS.md file still says to run an old build command that no longer exists, the agent wastes time. If your Copilot Instructions or Cursor Rules still describe an architecture you migrated away from, the agent starts from a false assumption. If a memory says “update the audit event mapping here” but that mapping moved last month, the agent can confidently do the wrong thing.

Same shape of problem, different storage layer. Stale memories can mislead an agent just as easily as wrong instructions can. This can be an easy trap to fall into, especially when you’re excited about building the capability of cross-session recall (as I’ve found first hand!)

That’s why I find GitHub Copilot Memory’s citation-based verification approach so interesting. The system doesn’t just retrieve a memory and trust it. It checks the cited code locations at the point of use. If the evidence no longer matches, the memory can be corrected, refreshed, or effectively ignored. Effectively, a built-in verification step is part of the memory system, which means the agent isn’t just recalling what it learned, but confirming that it still holds.

That’s a much healthier model than treating memory as a magical truth store. And it points to a bigger lesson: keeping instructions up to date and reviewing memories are the same kind of maintenance.

What current tools are actually doing with agentic memory

These systems aren’t identical, and they don’t all use the word ‘memory’ in exactly the same way. Still, the overlap is useful, because the shared pattern tells us more than the label does:

GitHub Copilot Memory

We introduced GitHub Copilot Memory briefly a little earlier; a repository-scoped, cross-agent memory system which is kept up to date through a citation-based verification system. I really like this pattern; Copilot Memory treats memory as something the agent verifies before use, not just something it carries forward and trusts is true by default. This is the difference between thinking of the behaviour of memory, compared to just persisting facts in an archive.

Claude Code: instructions plus auto memory

Claude Code makes the same distinction I’ve been highlighting throughout this post: CLAUDE.md files are instructions you write, alongside auto memory (the notes that Claude writes itself). Both load at the start of a session, but they serve different jobs. It reinforces the point running through this post: guidance (or instructions) and learned memory are related, but they are not the same layer.

Forgetting is a feature

Human memory has layers. The Atkinson-Shiffrin model is a way of describing that flow: sensory memory, short-term memory, and long-term memory. But the more useful lesson for agent builders is what happens after the moment itself. Human memory is selective. It consolidates, generalises and, most importantly, forgets. I believe that’s a better mental model for agent memory than a stale vault of facts:

- keep what keeps proving useful

- verify what might have changed

- drop what no longer helps

- let reflection turn repeated friction into reusable lessons

A practical starting point for agentic memory

On that point, if you’re building agentic systems and trying to make this useful in your own workflows, keep it simple.

1. Separate your layers

Ask three questions:

- What do we already know and want available from the start? Instructions and rules.

- What procedures should the agent reuse? Skills and specialist roles.

- What does the work keep teaching us that isn’t encoded anywhere yet? Memory.

2. Add a reflection step after meaningful work

At the end of a task or session, ask:

- Where did the agent lose time?

- What tool sequence worked best?

- What repo-specific fact was missing at the start?

- What nearly caused the wrong change?

- What should the next session know?

That’s how candidate memories appear.

3. Promote repeated lessons, not one-off trivia

The best memories are the ones with future consequence: The hidden dependency. The missing seed step. The approval gate that keeps flagging issues. The subtle convention that’s obvious to the team but invisible to the agent.

4. Review memories like you review instruction files

If a memory can influence future work, it deserves scrutiny:

- What evidence supports it?

- Does it still hold?

- Should it stay as a memory, or has it stabilised enough to transition into an instruction file?

Sometimes the end state of a good memory is that it stops being memory and becomes documented guidance, meaning the system learned something worth formalising.

Where this leaves the series

My first post was about shaping context, while the second was about packaging reusable knowledge. This post has been about what the work itself can teach the agent system. If I had to reduce the whole post to one line, it’d be this: Rules, skills, and instruction files tell the agent what you already know. Memory captures what the work teaches it next.

If you’ve been experimenting with this, especially around reflection and post-session learning, I’d be interested in learning what you’ve found. This is still a new and evolving area, and I’m sure there are patterns and pitfalls I haven’t seen yet! Drop a comment on the BlueSky thread below, or connect with me on LinkedIn to chat directly. If you’re building something interesting in this space, I’d also love to take a look through your GitHub repo!

Until the next post, bye for now!

Bluesky Interactions

Comments

Related Content

Context engineering: more context isn't better context

Better prompts help, but they're only part of the story. Context engineering is the craft of designing what an AI agent sees, when it sees it, and how that changes across the session. The goal isn't a bigger context window. It's a more effective one.

Rubber Duck Thursdays - Time to build!

GitHub

GitHubIn this live stream, we explore building a 3D tic-tac-toe visualization using Three.js and Copilot coding agent, demo MCP elicitation for gathering game preferences, and discuss the importance of context engineering when working with AI tools. We also cover GitHub changelog highlights including path-scoped custom instructions for Copilot code review and agents.md support.

Rubber Duck Thursdays - Let's build

GitHub

GitHubIn this stream, Chris catches up on several weeks of GitHub updates including the remote MCP server preview and Copilot coding agent for business users. The live coding session demonstrates adding internationalization to the Copilot Airways app using Copilot coding agent, custom VS Code chat modes for planning, and agent mode in Xcode for iOS development.