An interactive agentic AI mental model

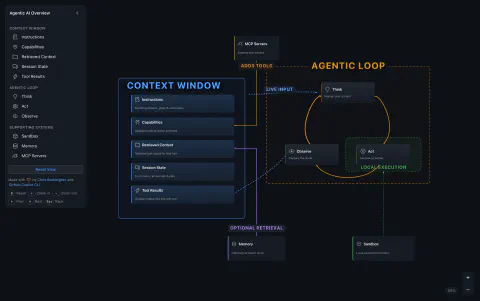

An interactive agentic AI mental model is an interactive guide to how an agentic AI system works. It brings together moving parts that are easy to talk about separately but hard to hold as one picture when you are building with agents.

The project walks through how instructions, capabilities, retrieved context, session state, memory, MCP (Model Context Protocol) servers, sandbox execution, tool results, and the Think-Act-Observe loop connect in practice.
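The following minimal Python sketch shows how several of these pieces might connect in a single Think-Act-Observe loop. All names are hypothetical and are not the project's implementation. This includes the `Agent` and `Action` classes, the `think` callable that stands in for a model call, the `multiply` tool, and the string format of session entries. Each step, the model sees instructions, retrieved context and session state. The tool result is then written back into session state for the next step.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical tool type: a capability that takes a string argument and returns a string.
Tool = Callable[[str], str]


@dataclass
class Action:
    """What the (stand-in) model decided to do this step."""
    tool: Optional[str]  # None means "answer directly and stop"
    argument: str


@dataclass
class Agent:
    instructions: str                     # system-level instructions
    tools: dict[str, Tool]                # capabilities the agent may call
    think: Callable[[list[str]], Action]  # hypothetical stand-in for a model call
    session: list[str] = field(default_factory=list)  # session state

    def run(self, task: str, retrieved: list[str], max_steps: int = 5) -> str:
        self.session.append(f"user: {task}")
        for _ in range(max_steps):
            # Think: assemble the context the model sees on this step.
            context = [self.instructions, *retrieved, *self.session]
            action = self.think(context)
            if action.tool is None:
                self.session.append(f"assistant: {action.argument}")
                return action.argument
            # Act: call the chosen tool (a real system might run this in a sandbox).
            result = self.tools[action.tool](action.argument)
            # Observe: the tool result becomes session state for the next step.
            self.session.append(f"tool[{action.tool}]: {result}")
        return "stopped: step limit reached"


def multiply(expr: str) -> str:
    # Hypothetical tool: evaluates "a*b" for two integers.
    a, b = expr.split("*")
    return str(int(a) * int(b))


def scripted_think(context: list[str]) -> Action:
    # Scripted "model": call the tool once, then answer using its result.
    last = context[-1]
    if last.startswith("tool[calc]: "):
        return Action(None, f"The answer is {last.removeprefix('tool[calc]: ')}")
    return Action("calc", "6*7")


agent = Agent(instructions="You are a careful assistant.",
              tools={"calc": multiply}, think=scripted_think)
answer = agent.run("What is 6 times 7?", retrieved=["doc: calc multiplies a*b"])
print(answer)  # The answer is 42
```

The step limit is a design choice in this sketch. It keeps a misbehaving `think` function from looping forever, which is one reason real agent loops usually bound the number of iterations.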

Features

- Interactive mental model: Explore the core components of an agentic AI system in one connected view

- Context engineering focus: Understand how context windows, retrieved context, and session state shape agent behaviour

- Operational building blocks: See where memory, tool use, MCP servers, and sandbox execution fit into the overall system

- Practical framing: Use the Think-Act-Observe loop as a simple way to reason about how agents work in real workflows

Related Content

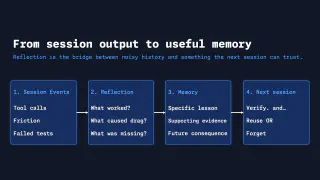

Agentic memory: what agents should and shouldn't remember

Conversation state and retrieved context lead naturally into memory, but only if we're clear about what memory is for. Rules, skills, and instruction files package what you already know. Memory should capture what the work itself teaches the system, and that means reflection, verification, and forgetting matter just as much as recall.
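To make reflection, verification and forgetting concrete, here is a minimal sketch of a memory store that treats each of them as an explicit operation next to recall. It is an illustration under stated assumptions, not the approach from the post. The `MemoryStore` class, its methods, and the age-based forgetting rule (`max_age` steps since last use) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    lesson: str     # what the work taught the system (produced by reflection)
    verified: bool  # has the lesson been checked against an outcome?
    last_used: int  # step at which it was last confirmed or recalled


class MemoryStore:
    """Hypothetical store: reflect -> verify -> recall, with age-based forgetting."""

    def __init__(self, max_age: int) -> None:
        self.max_age = max_age
        self.items: list[Memory] = []

    def reflect(self, lesson: str, step: int) -> None:
        # Record a candidate lesson; it is not trusted until verified.
        self.items.append(Memory(lesson, verified=False, last_used=step))

    def verify(self, lesson: str, step: int, held_up: bool) -> None:
        # Keep lessons that held up; drop ones the outcome contradicted.
        for m in list(self.items):
            if m.lesson == lesson:
                if held_up:
                    m.verified, m.last_used = True, step
                else:
                    self.items.remove(m)

    def forget(self, step: int) -> None:
        # Drop memories unused for more than max_age steps.
        self.items = [m for m in self.items if step - m.last_used <= self.max_age]

    def recall(self, keyword: str, step: int) -> list[str]:
        # Only verified lessons are recalled; recalling refreshes them.
        hits = [m for m in self.items if m.verified and keyword in m.lesson]
        for m in hits:
            m.last_used = step
        return [m.lesson for m in hits]


store = MemoryStore(max_age=10)
store.reflect("run tests before committing", step=1)
store.reflect("the API is always up", step=1)
store.verify("run tests before committing", step=2, held_up=True)
store.verify("the API is always up", step=2, held_up=False)
before = store.recall("tests", step=3)  # ['run tests before committing']
store.forget(step=20)                   # unused since step 3, so it is dropped
after = store.recall("tests", step=20)  # []
```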

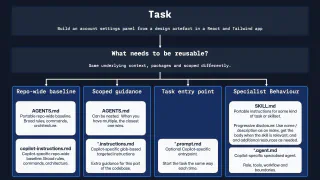

AGENTS.md and SKILL.md: building a reusable agent toolbox

Context engineering gets more useful when the knowledge it depends on is packaged for reuse. In this post I map out the portable core (AGENTS.md and SKILL.md) and the Copilot-specific concepts (custom instructions, agents, and prompt files), then look at how to decide which knowledge belongs where.

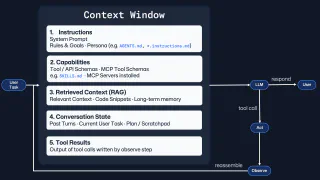

Context engineering: more context isn't better context

Better prompts help, but they're only part of the story. Context engineering is the craft of designing what an AI agent sees, when it sees it, and how that changes across the session. The goal isn't a bigger context window. It's a more effective one.
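One simple way to picture "a more effective context, not a bigger one" is a greedy selection. Rank candidate items by relevance, discard anything below a relevance threshold, and stop adding once a token budget is reached. This is a minimal sketch and not the method from the post. The `ContextItem` fields, the relevance scores and the token counts are all assumed inputs.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    text: str
    relevance: float  # assumed score, e.g. from a retriever or heuristic
    tokens: int       # assumed token count for this item


def build_context(items: list[ContextItem], budget: int,
                  min_relevance: float) -> list[ContextItem]:
    """Greedy selection: most relevant first, skip low-value items, respect the budget."""
    chosen: list[ContextItem] = []
    used = 0
    for item in sorted(items, key=lambda i: i.relevance, reverse=True):
        if item.relevance < min_relevance:
            break  # list is sorted, so every remaining item is less relevant
        if used + item.tokens <= budget:
            chosen.append(item)
            used += item.tokens
    return chosen


items = [
    ContextItem("task instructions", 1.0, 200),
    ContextItem("relevant design doc section", 0.8, 600),
    ContextItem("full chat history", 0.3, 5000),
    ContextItem("related test file", 0.7, 900),
]
selected = build_context(items, budget=1500, min_relevance=0.5)
print([i.text for i in selected])  # ['task instructions', 'relevant design doc section']
```

The 5,000-token chat history is excluded even though it is "more context". The test file is also left out because adding it would exceed the budget. A real system might instead summarise or trim such items rather than drop them.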